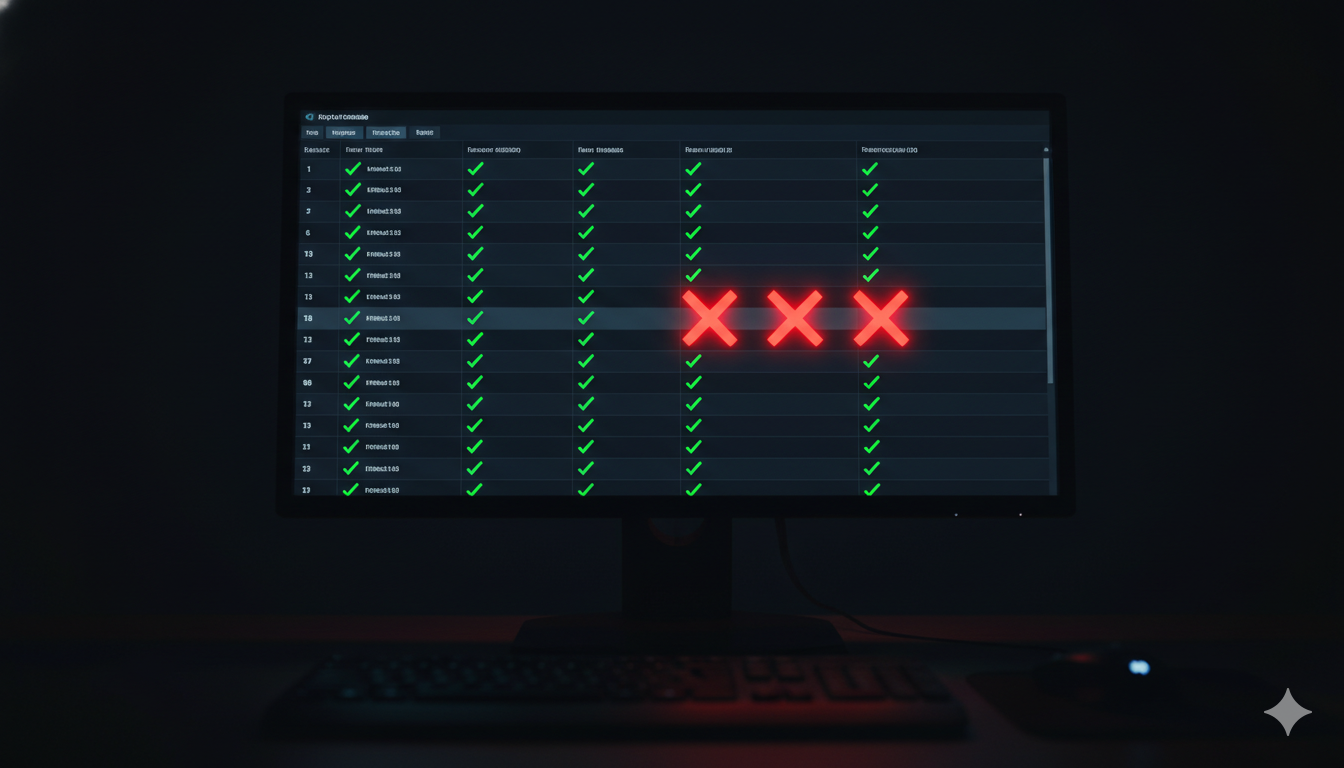

I once helped ship a production incident because three tests were flaky.

We were building a virtual currency feature at a gaming startup. We had an API in front of the database that ran locking transactions for currency upgrades. Three tests in that area had started failing intermittently - you'd get a failure in CI, re-run it, and they'd almost always pass. We debugged them multiple times. We couldn't find the root cause. We were under deadline pressure. And eventually we did what teams do: we stopped really looking at those three failures. "Oh, it's those same tests. They always pass on re-run."

Then I put up another PR. My teammate reviewed it. "Yep, same three failures as usual." We merged.

Ten minutes later, pagers started going off. It turned out there were four failures, not three. But because we'd pattern-matched on "those three flaky tests," both of us short-circuited. We missed the fourth. We'd broken the ability to do database transactions entirely. We lost ten minutes of real user transactions in production.

I wrote those tests. It was my change. My teammate is sharp. We both missed it.

An unreliable test is worse than no test at all.

Here's why. When a bug reaches production in an area with no test coverage, you explain to the postmortem audience that you triaged incorrectly and didn't cover it - you'll get it next time. When a bug reaches production in an area that had test coverage, you have to explain that the tests existed, they were failing, and you shipped anyway. One implies bad prioritization. The other implies you spent engineering resources building something you then trained yourself to ignore. That's worse.

What This Looks Like When Agents Are Writing Code

Now add AI agents to the picture.

When a flaky test fails, a human developer sighs, gets coffee, and re-runs the build. When a flaky test fails during an AI agent's run, the agent tries to fix the code to make the test pass. It doesn't know the test is flaky. It doesn't know this test has failed intermittently before. It sees a failing test, it writes code to make it green, and it moves on.

Now you have a perfectly green test suite validating completely broken behavior, produced at whatever speed the agent works, which is considerably faster than human pace. The slow burn I described above, where a team gradually learns to ignore its own failures, plays out in an afternoon.

This is why I have become genuinely unreasonable about flaky tests. The first time I see a test flip between pass and fail on the same commit SHA, the clock starts. I don't ask questions. I give the developer 24 hours to fix it; after that, I open a bug and quarantine the test. They can uncomment it once they've fixed it, and the bug and the test stay coupled - when one closes, the other has to close too.
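One way to keep a quarantined test and its bug coupled is to make the bug ID part of the skip itself. The sketch below does this with Python's standard `unittest.skip`. The `quarantined` helper, the `QUARANTINE` registry, the test class, and the `BUG-1234` ID are all hypothetical; none of this is a particular tracker's API.

```python
import unittest

# Hypothetical registry: quarantined test name -> the open bug that owns it.
QUARANTINE: dict[str, str] = {}


def quarantined(bug_id: str):
    """Skip a test and record which bug owns it (hypothetical helper)."""
    def decorator(test_func):
        QUARANTINE[test_func.__name__] = bug_id
        return unittest.skip(f"quarantined as flaky, tracked in {bug_id}")(test_func)
    return decorator


def stale_quarantines(closed_bugs: set[str]) -> list[str]:
    """Tests still skipped although their bug is closed: close one, close both."""
    return sorted(name for name, bug in QUARANTINE.items() if bug in closed_bugs)


class CurrencyUpgradeTests(unittest.TestCase):
    @quarantined("BUG-1234")
    def test_upgrade_holds_lock_until_commit(self):
        ...  # real assertions live here; the test is skipped while quarantined
```

A CI step could call `stale_quarantines` with the set of closed bug IDs from your tracker and fail whenever it returns anything. That enforces the rule that the bug and the test close together.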

Once one flaky test is tolerated, the rest sneak in. It's like the dishes at the office - one person leaves a cup in the sink, and two weeks later the sink is full. You have to be the person who puts the cup in the dishwasher every single time, or the default becomes something worse.

The Three Principles

Tests that belong in a CI pipeline and should run on every change have three traits: they are fast, they are reliable, and they are specific. All three. Not two out of three.

Fast means actionable results as quickly as possible. Your smoke tests - the ones that tell you the service is up and core flows work - run first. If you're failing a health check, you don't need to know what happened in your UI test suite. Fail fast, fail loud, give developers information they can act on immediately.

My unit test suite covers about 1.5 million lines of code with nearly 100,000 test cases, and it runs in six minutes. The target is microseconds per test, not milliseconds. The moment you hit a network call, you've crossed the millisecond line. Unit tests don't leave the box - no network, no filesystem if you can avoid it, no calls across service boundaries. They're cheap because they're contained. Run them as many times as you want.
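A common way to keep a unit test "in the box" is to hand the code under test an in-memory fake instead of a network client. Here is a minimal sketch of that pattern. `Ledger`, `InMemoryLedger`, and `CurrencyService` are hypothetical names chosen to echo the currency example, not code from the original system.

```python
import unittest
from typing import Protocol


class Ledger(Protocol):
    """Anything that reads and writes balances; in production, maybe a network client."""
    def balance(self, user_id: str) -> int: ...
    def set_balance(self, user_id: str, amount: int) -> None: ...


class InMemoryLedger:
    """Test double backed by a dict: no network, no filesystem."""
    def __init__(self, balances: dict[str, int]):
        self._balances = dict(balances)

    def balance(self, user_id: str) -> int:
        return self._balances[user_id]

    def set_balance(self, user_id: str, amount: int) -> None:
        self._balances[user_id] = amount


class CurrencyService:
    """Hypothetical service under test; it only depends on the Ledger interface."""
    def __init__(self, ledger: Ledger):
        self._ledger = ledger

    def purchase_upgrade(self, user_id: str, cost: int) -> bool:
        current = self._ledger.balance(user_id)
        if current < cost:
            return False
        self._ledger.set_balance(user_id, current - cost)
        return True


class PurchaseUpgradeTests(unittest.TestCase):
    def test_purchase_upgrade_sufficient_funds_deducts_cost(self):
        ledger = InMemoryLedger({"alice": 100})
        self.assertTrue(CurrencyService(ledger).purchase_upgrade("alice", 30))
        self.assertEqual(ledger.balance("alice"), 70)

    def test_purchase_upgrade_insufficient_funds_leaves_balance(self):
        ledger = InMemoryLedger({"bob": 10})
        self.assertFalse(CurrencyService(ledger).purchase_upgrade("bob", 30))
        self.assertEqual(ledger.balance("bob"), 10)
```

The real database locking still needs an integration test in a separate suite. The unit tests only cover the logic that sits in front of it, which is why they can run in microseconds.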

Reliable means one outcome, every time, on the same commit. A test that passes 99% of the time fails about once in every hundred runs. If your pipeline runs it a hundred times a day, that's a red build every day; in a high-velocity pipeline, it's a red build every hundred PRs. That's not reliable - that's a liability dressed up as coverage.
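The arithmetic behind a "99% reliable" test is short. This sketch assumes each run is independent, which is a simplification: real flakiness often clusters around load or time of day.

```python
def expected_failures(pass_rate: float, runs: int) -> float:
    """Expected number of red results from one test over `runs` independent runs."""
    return (1 - pass_rate) * runs


def chance_of_any_failure(pass_rate: float, runs: int) -> float:
    """Probability that at least one of `runs` independent runs comes back red."""
    return 1 - pass_rate ** runs


print(expected_failures(0.99, 100))      # about 1.0
print(chance_of_any_failure(0.99, 100))  # about 0.634
```

The same formula applies across tests as well as across runs. A suite of 100 independent tests that each pass 99% of the time comes back fully green only about 37% of the time.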

My practical threshold: if a re-run changes the result, the test is flaky and it goes into quarantine. No appeals for "probably a network blip." Probably isn't good enough.
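The "a re-run changed the result" rule is easy to automate from CI history. Below is a minimal sketch. It assumes you can export records of `(test_name, commit_sha, passed)`, and that record format is hypothetical. A test that fails consistently on a SHA is deliberately *not* flagged, because that is a real break, like the fourth failure in the story above.

```python
from collections import defaultdict


def find_flaky(results: list[tuple[str, str, bool]]) -> set[str]:
    """Return tests that both passed and failed on the same commit SHA."""
    outcomes: dict[tuple[str, str], set[bool]] = defaultdict(set)
    for test_name, sha, passed in results:
        outcomes[(test_name, sha)].add(passed)
    # Two distinct outcomes on one SHA means a re-run changed the result.
    return {test for (test, _sha), seen in outcomes.items() if len(seen) > 1}


history = [
    ("test_upgrade_commits", "a1b2c3", False),
    ("test_upgrade_commits", "a1b2c3", True),   # re-run flipped: flaky
    ("test_login_bad_password_returns_401", "a1b2c3", False),
    ("test_login_bad_password_returns_401", "a1b2c3", False),  # consistently red: a real failure
]
print(find_flaky(history))  # {'test_upgrade_commits'}
```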

Specific means each test has one thing it's checking, one clear failure mode, and a name that tells you exactly what went wrong when it fails. A test named LoginHappyPath that goes through login, uploads a photo, views it, edits a label, and checks session state is five tests in a trench coat. It's flaky by construction because five different systems have to cooperate for it to pass. Break it up.

Naming matters more than most people admit. Test_123 requires you to read the code to understand the failure. Login_BadPassword_Returns401 is the spec and the failure message in one. I'd rather have test names that are slightly too long than names that are opaque.
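Here is roughly what "one thing per test, named as the spec" looks like with Python's standard `unittest`. Python's convention wants a `test_` prefix and snake_case, so `Login_BadPassword_Returns401` becomes `test_login_bad_password_returns_401`. The `login` function and `USERS` store are hypothetical stand-ins for a real auth service. The photo upload, label edit, and session checks from the trench-coat test would each get their own test in their own suite.

```python
import unittest

# Hypothetical user store and login function standing in for a real auth service.
USERS = {"alice": "correct-horse"}


def login(username: str, password: str) -> int:
    """Return an HTTP-style status code for a login attempt."""
    if USERS.get(username) == password:
        return 200
    return 401


class LoginTests(unittest.TestCase):
    # One behavior per test; each name doubles as the spec and the failure message.
    def test_login_valid_credentials_returns_200(self):
        self.assertEqual(login("alice", "correct-horse"), 200)

    def test_login_bad_password_returns_401(self):
        self.assertEqual(login("alice", "wrong"), 401)

    def test_login_unknown_user_returns_401(self):
        self.assertEqual(login("mallory", "anything"), 401)
```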

The 1% Coverage Rule

I used to require 70% code coverage. My developers' second favorite hobby became arguing about why their PR at 69% was actually fine. What counts as a covered line? What about generated code? What about this one edge case that would take two weeks to properly test?

I changed the rule. I now require 1% coverage.

What 1% actually means is: did you test anything? At all? Because nobody - not the developer, not their manager, not anyone else in the room - can make a credible argument that 1% is too high a bar. It converts the question from an argument about percentages to a binary: did you test it, or didn't you?

And because testing something is their responsibility, developers end up testing more than the bare minimum. We consistently land between 70 and 80% anyway - which is the right range for most production codebases. I never have to have the 69% argument again.
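The gate itself is trivial, which is the point: it answers "did you test anything?" rather than inviting a debate about percentages. With coverage.py, `coverage report --fail-under=1` gives you this off the shelf. The function below is a hypothetical stand-alone sketch of the same check. The choice to pass changes with no measurable lines is an assumption, not part of the rule described above.

```python
def coverage_gate(covered_lines: int, total_lines: int, minimum_percent: float = 1.0) -> bool:
    """Pass if measured line coverage meets the deliberately tiny floor."""
    if total_lines == 0:
        # Assumption: nothing measurable (e.g. a docs-only change) passes.
        return True
    percent = 100.0 * covered_lines / total_lines
    return percent >= minimum_percent


print(coverage_gate(0, 5000))     # False: nothing was tested
print(coverage_gate(3450, 5000))  # True: the 69% PR passes without an argument
```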

How to Structure a Suite That Survives High-Velocity Development

Think of your test suites as concentric circles.

The innermost ring is your smoke tests: minimum viable product, core functionality, the things that have to work for anything else to be testable. These run on every single build, no exceptions. They must be bulletproof and fast. If your smoke suite contains a flaky test, that test is the most dangerous piece of code in your repository.

The next ring is regression: everything your customers actually pay you for. These run on most builds. If they're slow enough to be painful, the fix is to run relevant tests based on what changed - not to run them less often.

The outer rings - security scans, performance tests, visual validation, dependency audits - run selectively. If you haven't touched authentication, don't run your security suite. If nothing changed in your data layer, don't run your performance suite. Machine time is cheap compared to human time, but that's not a license to run everything on everything. Match the test scope to the change scope.
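Matching test scope to change scope can start as a simple table from path prefixes to suites. This is a minimal sketch: the directory names and the `OUTER_RING_TRIGGERS` mapping are hypothetical. It also always runs regression, which simplifies the "most builds, relevant tests" rule above.

```python
# Hypothetical mapping from changed-path prefixes to the outer-ring suites they trigger.
OUTER_RING_TRIGGERS: dict[str, tuple[str, ...]] = {
    "security": ("auth/", "sessions/"),
    "performance": ("db/", "storage/"),
    "visual": ("ui/",),
    "dependency_audit": ("requirements.txt", "package-lock.json"),
}


def select_suites(changed_paths: list[str]) -> list[str]:
    """Smoke and regression always run; outer rings run only when their area changed."""
    suites = ["smoke", "regression"]
    for suite, prefixes in OUTER_RING_TRIGGERS.items():
        # str.startswith accepts a tuple and matches any of its prefixes.
        if any(path.startswith(prefixes) for path in changed_paths):
            suites.append(suite)
    return suites


print(select_suites(["ui/header.tsx"]))  # ['smoke', 'regression', 'visual']
```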

One firm pipeline rule: if a build fails a smoke test, stop. Don't run the regression suite. Don't run the outer rings. Something is broken, a code change is coming, and everything after the smoke failure is suspect. You want to know in five minutes, not after 90 minutes.
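The stop-on-smoke-failure rule amounts to marking some stages as gates. Below is a hypothetical in-process sketch. A real pipeline would express this in its CI system's stage configuration, and the `Stage` type here is an illustration, not any CI tool's API.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Stage:
    name: str
    run: Callable[[], bool]  # returns True if the suite passed
    gate: bool = False       # a failing gate stage stops the pipeline


def run_pipeline(stages: list[Stage]) -> dict[str, bool]:
    """Run stages in order; stop as soon as a gate stage fails."""
    results: dict[str, bool] = {}
    for stage in stages:
        results[stage.name] = stage.run()
        if stage.gate and not results[stage.name]:
            break  # smoke failed: anything after it would be suspect, so skip it
    return results


smoke_fails = [
    Stage("smoke", lambda: False, gate=True),
    Stage("regression", lambda: True),
    Stage("security", lambda: True),
]
print(run_pipeline(smoke_fails))  # {'smoke': False}
```

Non-gate stages still report every result. A regression failure therefore doesn't hide what the outer rings found, but a smoke failure stops everything.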

Test Architecture Is Still Your Job

Even if you're using AI to write test cases - and I do - the architecture is yours.

AI generates tests. It generates them based on what the average of the internet thinks good tests look like. The average of the internet will give you tests that chase coverage percentage, tests that validate the implementation instead of the behavior, and tests that duplicate coverage you already have while leaving real gaps untouched. The AI doesn't know which flows are your smoke tests. It doesn't know which service boundaries are most likely to break under load. It doesn't know that your currency transaction code is the code where flakiness will cost you real money.
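The difference between validating the implementation and validating the behavior shows up even in a tiny example. In this sketch, the `Pricing` class and its discount rule are hypothetical, and `unittest.mock.patch.object` is the standard-library patching call. The first test is the kind that chases coverage. The second is the kind that catches regressions.

```python
import unittest
from unittest import mock


class Pricing:
    """Hypothetical pricing rule for currency upgrades."""

    def round_coins(self, amount: float) -> int:
        return int(round(amount))

    def upgrade_cost(self, base_cost: int, discount_percent: int) -> int:
        # Apply a percentage discount, then round to whole coins.
        return self.round_coins(base_cost * (100 - discount_percent) / 100)


class UpgradeCostTests(unittest.TestCase):
    def test_upgrade_cost_calls_round_coins(self):
        # Implementation-coupled: pins an internal helper. It breaks on a harmless
        # refactor, yet would still pass if the pricing math were wrong,
        # because it never looks at the returned price.
        with mock.patch.object(Pricing, "round_coins", return_value=0) as helper:
            Pricing().upgrade_cost(100, 25)
            helper.assert_called_once()

    def test_upgrade_cost_25_percent_discount_returns_75(self):
        # Behavior-focused: checks the price a player actually pays.
        self.assertEqual(Pricing().upgrade_cost(100, 25), 75)
```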

You have to define what good looks like. That means deciding the architecture, naming the suites, drawing the lines between unit and integration and smoke and regression. The AI can fill in test cases once you've done that work. The structure has to come from someone who knows the system - someone who has read the postmortem, or written it.

The test principles here aren't just still relevant in the age of agentic development. Given the speed at which agents generate and iterate on code, they are more important than ever. The velocity is higher, the feedback loops are tighter, and the cost of tolerated flakiness shows up faster. None of that changes what makes a test good. It just raises the stakes for getting it right.

This is post 4 of 7 in The Boring Parts Matter: Engineering Fundamentals for the Agentic Era.