I have one rule for every AI coding session: we are not done until the build compiles and all tests pass.

Not "I'll clean it up later." Not "the test is probably flaky, let's just push." Not "CI is being slow today, I'll check it tomorrow." The session ends when CI is green. Full stop.

This sounds obvious. It is not obvious in practice, especially when you're twenty minutes into a session that's gone off the rails and the agent is confidently explaining why the failing test is wrong and the code is right. The rule exists precisely for those moments. When I'm tired and the agent sounds convincing and the deadline is close, the rule is the only thing standing between me and a production incident.

The reason for the rule is simple: CI is not just a build system. In an agentic workflow, it's the primary communication channel between what the agent produces and what your engineering standards require. If the agent can't read CI feedback, understand what failed, and fix it without a human translating, then the agent isn't really autonomous -- it's just an expensive typing assistant.

What CI Feedback Looks Like to an Agent

A senior engineer navigating a CI failure has years of context. She knows that a NullPointerException in AuthService.java during the integration test run usually means the test database didn't seed correctly, and the real fix is to re-run with --clean-db. She knows that the lint warning about line length can be ignored until after the logic is right. She knows that the security scan flags this particular library every time and the team has accepted the risk.

None of that is written down. It lives in her head, assembled from years of accumulated experience.

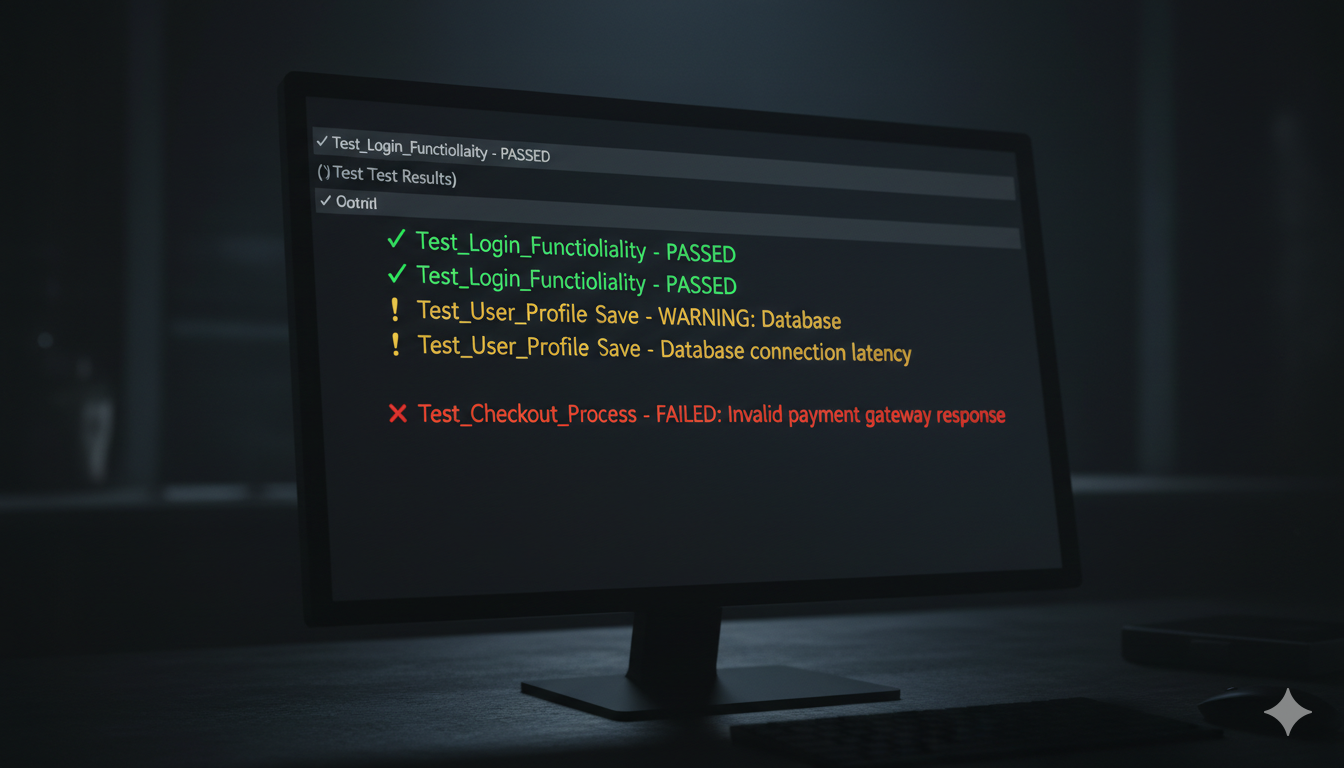

An agent has none of it. When CI fails, the agent sees the failure message -- and exactly the failure message. If the message is clear and specific, the agent has a real chance of self-correcting. If the message is "build failed, see logs," the agent has to either guess or ask a human to go read the logs and translate.

This is the gap most teams haven't closed yet. They built their CI pipelines for humans who have context. Then they added agents who don't.

What Makes CI Agent-Readable

The criteria for agent-readable CI overlap with -- but are stricter than -- the criteria for human-friendly CI.

Fast. Feedback in minutes, not hours. An agent waiting two hours for a CI result is an agent blocking a human for two hours. This isn't new advice, but the stakes are higher with agents because there's no "I'll check back on it after lunch" -- the session stalls. Smoke tests first, fail fast, don't run the full suite if the first gate already failed.
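Here is a rough sketch of what "fail fast" can mean in code. The `Stage` type, the stage names, and the lambdas standing in for real build and test commands are all hypothetical; a real pipeline would express this in its CI configuration rather than in Python.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Stage:
    name: str                # hypothetical stage label, e.g. "smoke"
    run: Callable[[], bool]  # returns True when the stage passes


def run_fail_fast(stages: List[Stage]) -> Tuple[bool, List[str]]:
    """Run stages in order and stop at the first failure.

    Returns (all_passed, names_of_stages_that_ran).
    """
    ran: List[str] = []
    for stage in stages:
        ran.append(stage.name)
        if not stage.run():
            return False, ran  # later, slower stages never start
    return True, ran


# Stand-in stages: real ones would shell out to the build and test tools.
stages = [
    Stage("smoke", lambda: True),
    Stage("unit", lambda: False),        # simulated failure
    Stage("integration", lambda: True),  # never runs because "unit" failed
]
passed, ran = run_fail_fast(stages)
print(passed, ran)
```

The point of the ordering is that the cheapest gate answers first, so the agent gets a red signal in the time it takes to run the failing stage, not the whole suite.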

Specific. The error message should identify exactly what failed, in exactly what file or service, with enough context to act on it without diving into logs. "Tests failed" is useless. "5 tests failed in UserAuthTest, all related to BadPassword scenarios, stack trace attached" is actionable. Agents are very good at acting on specific errors. They are very bad at navigating vague ones.
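To make the difference concrete, here is a minimal sketch of a summarizer that turns raw failures into a message like the actionable one above. The `Failure` record, the `summarize` function, and the individual test names are invented for illustration; your test runner's report format will differ.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Failure:
    suite: str    # test class or file, e.g. "UserAuthTest"
    test: str     # individual test name
    message: str  # first line of the assertion or exception


def summarize(failures: List[Failure]) -> str:
    """Build one message an agent can act on without opening the logs."""
    if not failures:
        return "All tests passed."
    by_suite: Dict[str, List[Failure]] = defaultdict(list)
    for failure in failures:
        by_suite[failure.suite].append(failure)
    lines = [f"{len(failures)} test(s) failed in {len(by_suite)} suite(s)."]
    for suite in sorted(by_suite):
        lines.append(f"{suite}: {len(by_suite[suite])} failed")
        for failure in by_suite[suite]:
            lines.append(f"  - {failure.test}: {failure.message}")
    return "\n".join(lines)


# Made-up failures, for illustration only.
report = summarize([
    Failure("UserAuthTest", "test_bad_password_rejected", "expected 401, got 200"),
    Failure("UserAuthTest", "test_bad_password_lockout", "expected lockout after 5 attempts"),
])
print(report)
```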

Consolidated. One place. Not "check the build status in CircleCI, the test results in the GitHub Actions tab, the security scan in Snyk, and the lint output in the PR comments." An agent that has to navigate multiple systems to understand why a PR failed is going to miss things, just like a junior developer would. Every check that matters should surface in the PR review interface, clearly labeled, in a format the agent can read in one pass.
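One way to picture consolidation is a single report assembled from every check, with failures listed first. This is only a sketch: `CheckResult`, `consolidate`, and the sample results are hypothetical, and fetching results from each real system is left out entirely.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class CheckResult:
    source: str   # hypothetical label for where the check ran
    name: str
    passed: bool
    detail: str   # one-line, human- and agent-readable explanation


def consolidate(results: Iterable[CheckResult]) -> str:
    """Merge all check results into one report, failures first."""
    # False sorts before True, so failed checks come first.
    ordered = sorted(results, key=lambda r: (r.passed, r.source, r.name))
    failed = [r for r in ordered if not r.passed]
    overall = "PASS" if not failed else f"FAIL ({len(failed)} of {len(ordered)} checks)"
    lines = [f"Overall: {overall}"]
    for r in ordered:
        status = "PASS" if r.passed else "FAIL"
        lines.append(f"[{status}] {r.source}/{r.name}: {r.detail}")
    return "\n".join(lines)


# Made-up results, for illustration only.
summary = consolidate([
    CheckResult("build", "compile", True, "ok"),
    CheckResult("security", "dependency-audit", False, "1 known vulnerability in a new package"),
    CheckResult("lint", "style", True, "ok"),
])
print(summary)
```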

Deterministic. Every check that runs in CI should produce the same result on the same code. This is the flaky test problem applied to the entire pipeline -- a CI system that sometimes fails and sometimes passes on the same commit is one an agent cannot trust. If the agent can't trust the feedback, it will either retry until it gets a green, or ask a human every time, or worse, start ignoring failures it has seen before.
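A simple way to probe for this, sketched below, is to rerun one check several times against the same commit and see whether the outcomes agree. The `classify_check` helper and its labels are my own invention, and it assumes the check can be rerun cheaply and reports a plain pass or fail.

```python
from typing import Callable


def classify_check(check: Callable[[], bool], runs: int = 5) -> str:
    """Rerun a check on unchanged code and report whether it is stable."""
    if runs < 1:
        raise ValueError("runs must be at least 1")
    outcomes = {check() for _ in range(runs)}
    if outcomes == {True}:
        return "stable-pass"
    if outcomes == {False}:
        return "stable-fail"
    return "flaky"  # same code, different answers: the signal cannot be trusted


# Simulated check that passes, fails, then passes on the same code.
simulated = iter([True, False, True])
label = classify_check(lambda: next(simulated), runs=3)
print(label)
```

A handful of identical outcomes does not prove a check is deterministic; it only catches the checks that are obviously not.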

There's a fifth requirement that doesn't get its own word but matters as much as any of the above: every check needs to be decidable. A definitive yes or no on "did this pass?"

I use visual validation as the clearest example of what happens when this breaks down. Visual diff tools have been around for years. You run your UI test suite, it detects that text changed somewhere, and it surfaces a result. Not a pass. Not a fail. Something changed. A human has to go look and decide: were those intentional changes, or not? The tool's only truly useful mode is when you're expecting zero changes and it confirms that expectation. Otherwise, it's requesting human judgment to resolve an open question.

That works fine in a traditional test suite when humans are the ones acting on CI feedback. It breaks in an agentic workflow. In a standard automated pipeline, the agent has no way to go look. It sees green or red, and self-corrects on red. It cannot process yellow. (A fully agentic setup with computer use changes this -- an agent that can operate a browser can, in principle, evaluate a visual diff the way a human would. But that's a human-in-the-loop replacement, not a guardrail. A guardrail is something that runs automatically, produces a deterministic signal, and doesn't require the agent to exercise judgment about its own output.)

If a check in your pipeline can only be resolved by a human looking at it, that check is a human gate. Label it as one, put a human on the other side of it, and don't expect an agent to self-correct from it.
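Labeling can be as simple as giving every check a three-way outcome and routing on it. The sketch below uses the visual diff case; the `Outcome` enum, the function names, and the rule that any change fails when none was expected are assumptions of mine, not features of any particular visual diff tool.

```python
from enum import Enum


class Outcome(Enum):
    PASS = "pass"
    FAIL = "fail"
    NEEDS_HUMAN = "needs_human"  # the "yellow" result an agent cannot act on


def visual_diff_outcome(changed_regions: int, changes_expected: bool) -> Outcome:
    """Decide what a visual diff result means for the pipeline."""
    if changes_expected:
        # Someone has to judge whether the changes are the intended ones.
        return Outcome.NEEDS_HUMAN
    # Assumption: when zero changes were expected, any change is a failure.
    return Outcome.PASS if changed_regions == 0 else Outcome.FAIL


def next_actor(outcome: Outcome) -> str:
    """Route the result: the agent only ever receives a clear red."""
    routing = {
        Outcome.PASS: "nobody",
        Outcome.FAIL: "agent",
        Outcome.NEEDS_HUMAN: "human",
    }
    return routing[outcome]


print(next_actor(visual_diff_outcome(changed_regions=3, changes_expected=True)))
```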

What Belongs in the PR Feedback

The specific checks matter less than the consolidation and clarity. But in a well-configured agentic CI setup, every PR should get automated feedback on at minimum:

Build and unit tests. The fastest checks, the first to run, and the gating condition for everything else. If the code doesn't compile or unit tests fail, nothing else runs.

Integration tests. The real validation that services can talk to each other and that the system behavior is correct, not just the individual units.

Linting and style. Not optional niceties -- style violations create noisy diffs, confuse future agents reading the code, and accumulate technical debt fast when hundreds of PRs are landing. Auto-fixable style issues should be auto-fixed in CI rather than flagged as failures. Non-auto-fixable ones should surface as clear, specific comments: "line 47 violates naming convention X because Y."

Security scanning. Automated dependency audit and static analysis. New dependencies need to pass the audit, and known vulnerabilities need to be flagged. This is non-negotiable in a high-velocity environment where agents are adding packages regularly.

Coverage gate. Not a strict percentage, but a binary: did the PR include tests? Untested code from an agent is the same problem as untested code from a human, just more of it.
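The binary coverage gate is the easiest of these to sketch. The version below looks only at the list of changed paths in a PR; the file-naming conventions it relies on (`tests/` directories, `test_` prefixes, `.py` sources) are assumptions that would need to match your repository.

```python
from pathlib import PurePosixPath
from typing import Iterable

SOURCE_SUFFIXES = {".py"}  # assumption: which extensions count as source code


def is_test_file(path: str) -> bool:
    """Assumed convention: tests live under tests/ or are named test_*.py / *_test.py."""
    p = PurePosixPath(path)
    return (
        "tests" in p.parts
        or p.name.startswith("test_")
        or p.name.endswith("_test.py")
    )


def coverage_gate(changed_files: Iterable[str]) -> bool:
    """Pass if the PR touches no source code, or touches source and tests together."""
    files = list(changed_files)
    source_changed = any(
        PurePosixPath(f).suffix in SOURCE_SUFFIXES and not is_test_file(f)
        for f in files
    )
    tests_changed = any(is_test_file(f) for f in files)
    return tests_changed or not source_changed


print(coverage_gate(["src/auth.py"]))                         # source only
print(coverage_gate(["src/auth.py", "tests/test_auth.py"]))   # source plus tests
```

This checks that tests were included, not that they are good tests. It is a floor, which is all a binary gate is meant to be.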

The PR as a Conversation

One mental model that's helped me think about CI in agentic workflows: the PR is a conversation between the agent and your engineering standards. The agent makes a proposal. CI responds with structured feedback. The agent revises. This loop continues until the proposal passes, then a human reviews the passing result rather than the raw output.
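Reduced to its skeleton, that conversation is a loop. In the sketch below, `propose` and `run_ci` are hypothetical stand-ins for the agent and the pipeline, and the `max_rounds` cap is an assumption I've added so a stuck session hands off to a human instead of looping forever.

```python
from typing import Callable, List, Tuple


def review_loop(
    propose: Callable[[List[str]], str],
    run_ci: Callable[[str], List[str]],
    max_rounds: int = 5,
) -> Tuple[bool, int]:
    """Alternate agent proposals and CI feedback until CI returns no failures.

    Returns (ci_is_green, rounds_used).
    """
    feedback: List[str] = []
    for round_number in range(1, max_rounds + 1):
        change = propose(feedback)  # agent revises based on the last feedback
        feedback = run_ci(change)   # CI answers with a list of failures
        if not feedback:
            return True, round_number  # green: ready for human review
    return False, max_rounds  # still red: escalate to a human


# Toy stand-ins: the agent fixes its draft once it has seen any feedback.
def toy_agent(feedback: List[str]) -> str:
    return "fixed" if feedback else "draft"


def toy_ci(change: str) -> List[str]:
    return [] if change == "fixed" else ["UserAuthTest: 1 failed"]


print(review_loop(toy_agent, toy_ci))
```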

The human's job shifts. Instead of reviewing code to find issues that CI missed, the human is reviewing a code change that CI has already validated -- and asking higher-order questions: does this solve the right problem, does the approach make sense, are there architectural implications CI isn't equipped to catch?

This is a better use of human judgment than catching lint errors or checking whether tests run. But it only works if CI is doing its job completely. A human who has to catch what CI missed is a human doing CI's job at human speed.

The Feedback Loop You're Not Running Yet

Most engineering teams have CI. Fewer have CI that's been specifically configured to work well with agents. The gap is usually in three places: error message quality (too vague), check consolidation (too scattered), and flakiness (too unreliable to trust).

These are all solvable problems. Better error messages are largely a one-time investment per check. Consolidation is a configuration decision. Flakiness is the ongoing work described in the testing post earlier in this series.

The teams that will move fastest in the next twelve months are the ones that treat their CI pipeline not as plumbing, but as the primary feedback mechanism for an automated workforce. Build it accordingly.

What This Looks Like When It's Working

The teams I see getting the most out of agentic development have taken this one step further: they've built specialized agents into the CI loop itself.

Not just one agent writing code and waiting for CI to respond -- a system of agents checking each other's work. A test expert agent that reviews PRs for coverage gaps and surfaces specific risk: "you added this flow but have no test for the error path -- here's what breaks if it fails in production." A DevOps agent that flags potential rollout issues before they land: "this change modifies a shared config value -- here are the three services that will be affected on deploy."

These agents don't replace the CI checks. They extend the feedback loop into territory that static analysis can't reach -- the gap between "the tests pass" and "this is safe to ship." That gap has always existed. Human reviewers used to cover it by instinct. Now you can cover it programmatically, which means it gets covered consistently instead of depending on who's on rotation.

The CI pipeline as manager isn't just a metaphor. In a well-built agentic system, the manager has a staff.

This is post 5 of 7 in The Boring Parts Matter: Engineering Fundamentals for the Agentic Era.